Over the last 2 weeks, my social feeds have become unreasonably cluttered with AI-generated images, text, and even code. It’s no secret that I like and support AI artists, and really see value in the tool. However, I have some mixed feelings about the direction the technology is headed in, and dislike the knee-jerk, dramatic reactions some people are having to it all.

I want to clarify that while I am generally focused on the aspect of AI being used to generate art, I don’t think it’s healthy to discuss this without a dive into all facets of what AI is being used for. This series of articles will cover usage from art and music, to chatbots in different industries. I will even generate some text with ChatGPT just to demonstrate some points, and will clearly indicate it when I do. And to be clear, I do not see AI as anything other than a tool at this time, just like a hammer or a brush.

Replacements?

I understand how it feels when new technologies come out, and appear to be replacing jobs or skill sets: it creates a lot of uncertainty. This is the way of history. New advances in any field threaten all gatekeepers, especially when they set a fire under the theories, views, and methodologies that got them where they are. Content management systems like WordPress and Drupal wiped out previous web design methodologies in web2. And then services like Squarespace and Wix further contributed to the obsolescence of “webmasters.” The developers who adopted them and stuck around are now able to navigate most of these tools.

Another example is the early days of image manipulation software, where there was a serious concern that software would replace artists. It was also a very exciting time for those that ended up being the progenitors of modern digital graphic design, though it wasn’t exactly a pleasant experience. Early iterations of CorelDraw, Photoshop, GIMP, etc. were all fucking awful. Painful even. I shudder just thinking about it. Look at this shit:

Innovative technologies are often the reason for creating new industries, and anybody that has been engaged with digital media for the last 40 years can see how much things have changed. Did vending machines make the local grocery store clerks obsolete? Did digital photography kill film photography? Are video games replacing athletics? Objectively, the answer is no.

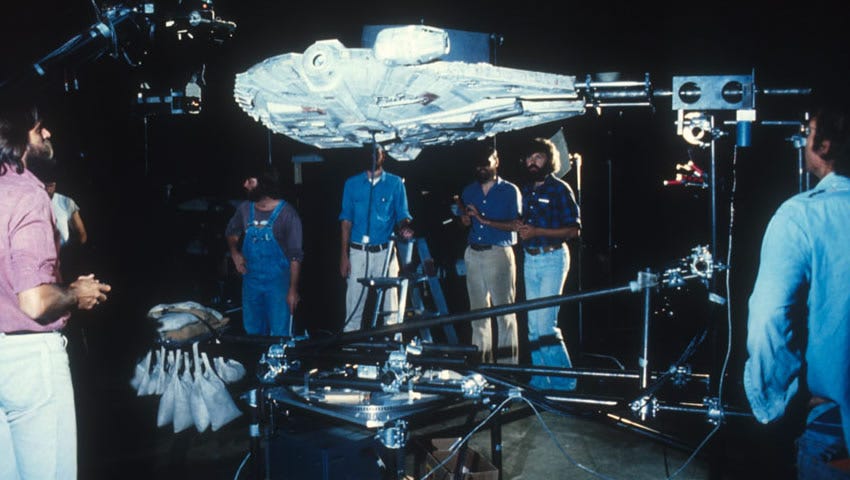

However, it is difficult to say that these developments have not changed the way we interact with the world around us. In the grand scope of history, the art world has very abruptly come to accept digital art as a valid medium. Computer-generated graphics have come a long way, and most folks don’t even question whether something in a Hollywood action movie is real or not. The Colin Cantwell and Jim Henson days of Star Wars are long gone… HOWEVER, people still greatly appreciate “analog” arts! In fact, I will argue that traditional art (and even special FX) has gained value just by not being digitally created. People still buy physical Star Wars collectibles like crazy!

Without harping on this too hard just yet, I want to emphasize the obvious point here: many artists adopted new tools, and developed new skill sets along the way. This is where new industries were not just born, but generational shifts into digital realms took place. As a photographer, I fucking LOVE digital photography, because instead of having to deal with chemicals and developing film, I can bop around in Lightroom and Photoshop for a bit - or not at all, if I got the camera settings right through its fancy digital UI.

That being said, I still love film photography better than any digital stuff I see, and nothing will probably change this. If you’ve been working in a medium long enough, you know how easy it gets to distinguish between formats when you see them at first glance. This is how I feel with film - I know it when I see it, and it will be infinitely more valuable to me than any digital photography not just because of what goes into it, but because it just feels a certain way to me.

Actual Concerns with AI

I’m not trying to compare past software developments to AI on a 1:1 basis. That’s ludicrous. When I think of AI, I think of how this is a technology that is changing all facets of the world. To understand the gravity of this statement in the context of AI, one really has to get a grip on what the fuck people are actually doing with AI.

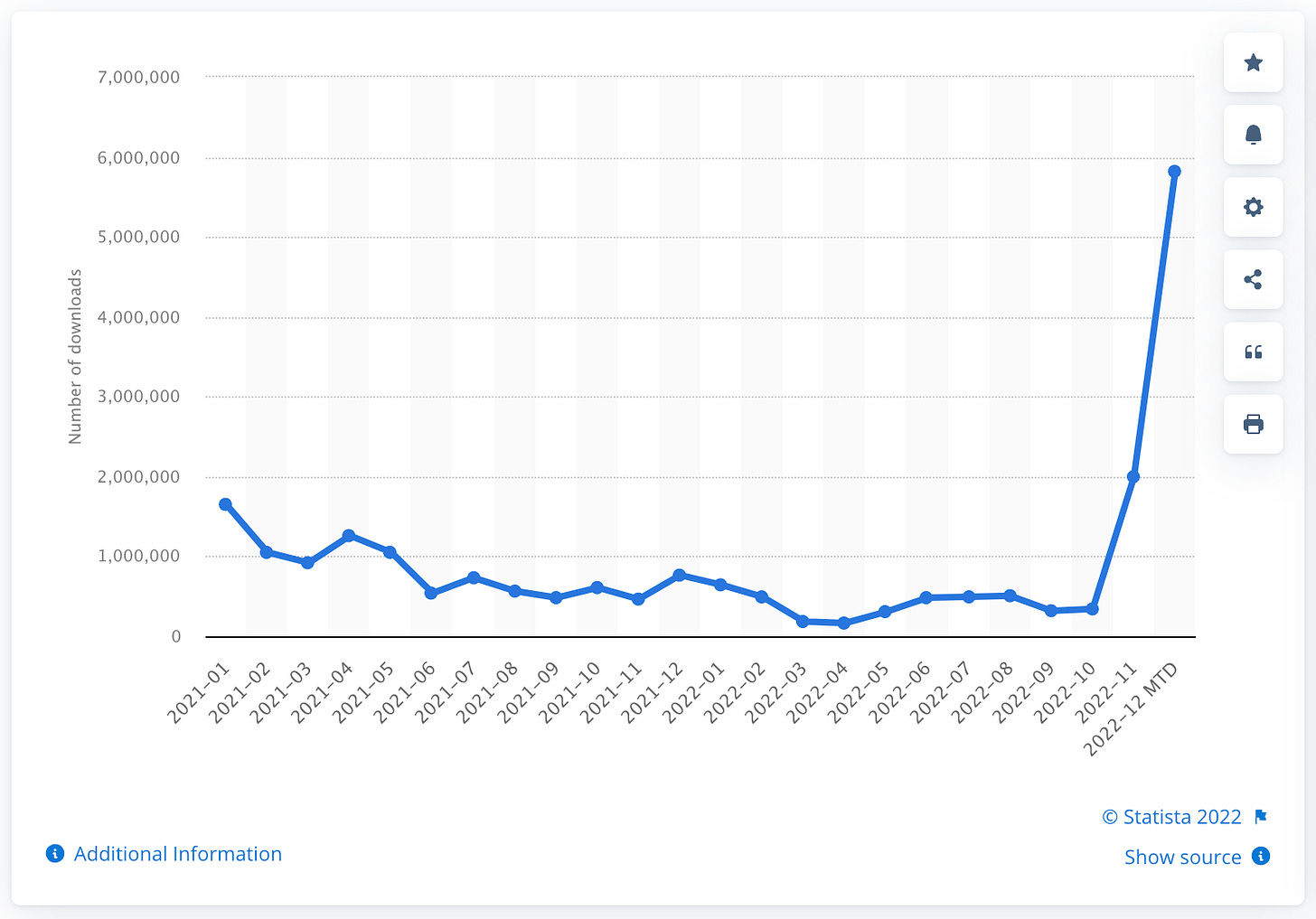

You think all those Lensa generated portraits on Facebook and Instagram are the bulk of what people are doing out there with it? Good lord. OK, so here are some statistics on Lensa app downloads from January 2021 till now:

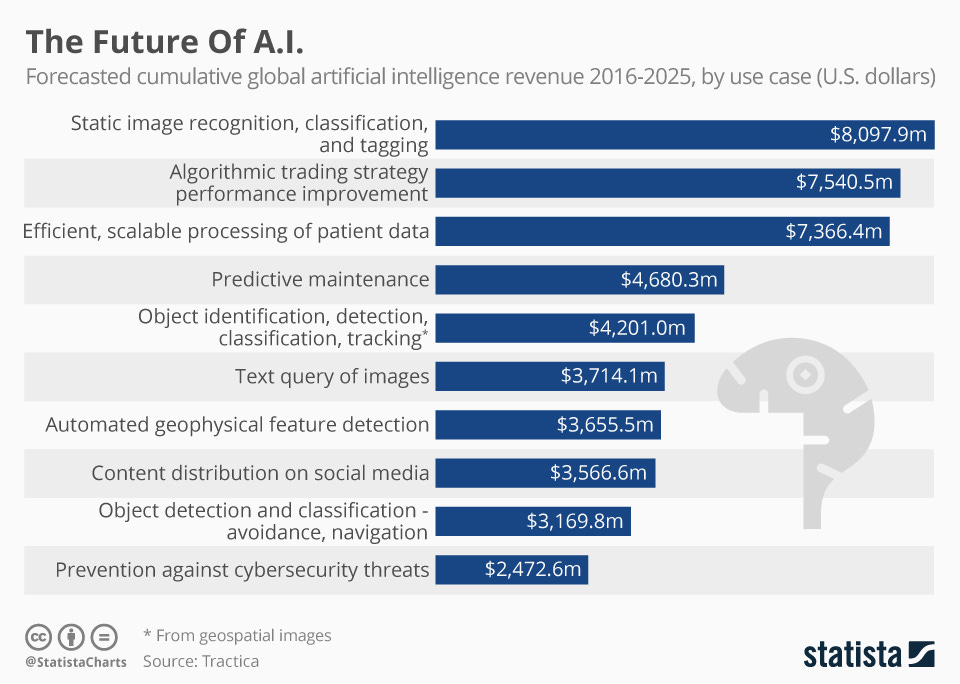

Wild, right? Almost 6 million folks have downloaded the app and begun dumping a bunch of portraits of themselves across social media. Well, that’s just a free app. Here are some numbers on how much folks have been spending (and are predicted to spend) on AI across different industries. This kind of data lays out some uncomfortable truths about how little AI can’t do. Portraits probably make up a single-digit percentage of what AI is being used for.

I would say the main concern with AI is simply that we have no fucking clue what we’re in for. Therapy chatbots. SecOps decisions from AI predictions. Fintech AIs moving trillions around autonomously. AI design & 3D printing for optimized architecture. There are quite a few things happening that we can’t fully comprehend, and we have no idea how any of it will be regulated until the ancient rotting semi-sentient jarred farts that make our laws give up their seats to folks who actually speak the language of innovation and tech.

We already have this problem with crypto - 90% of the folks writing regulation have no idea how it works. Now picture some of these scaly archaic pissants trying to figure out how to handle copyright laws when it comes to datasets. You better believe they will tip their hats to the companies investing the most money.

Problems & Solutions

As artists of all mediums, we are experiencing an overwhelming influx of AI art. We are threatened by automation when it comes to commissions/hires, as people use AI to generate art for their publications, marketing projects, and general personal use. We are also concerned about our provenance, and how there aren’t really any effective regulations in place that prevent people from training their AIs with art from artists that did not consent to being used in datasets. It’s a form of plagiarism that is so new to us that it feels like we have all been caught with our pants down.

I want to reiterate a personal opinion here, and how this might be an opportunity for those that are deeply dedicated to their craft. As we see an over-saturation of AI-generated art flooding markets, non-AI creations will increase in value. The important thing that artists will have to adapt to is showing proof-of-work. Ironic, right? Not in a blockchain sense (Ethereum just recently moved over to proof-of-stake LOL), but in the sense of living in an age of social media that demands artists learn to market themselves. It was already tough, but now everybody else has to get on our level of difficulty. If anything, this may force the quality of art to increase.

Most artists know the value of WIPs, and process videos; folks love these. And in a sense, it not only shows how much work goes into making art; it answers many questions without the artist having to answer 500 questions about how they made something. I love a good AMA, but fucking hell is it exhausting after the first few times. Here’s a process video post from when I dropped my first piece on Makersplace, back in September. Proof it was not an AI that made it!

From a practical perspective, it makes sense that a suite of tools with problematic consequences will inevitably have other tools created to counter them.

My father always used car analogies to explain everything - they don’t always work, but in this case I think it is a useful analogy. In 1769, a fellow named Nicolas-Joseph Cugnot created the first ever self-propelled road vehicle. It was a steam-powered, 3-wheeled, military tractor-like contraption. Since then, automobiles have gone through many variations, and ultimately arrived at what we all think of today, when somebody says “car!” 🚗💨

These things can seriously harm and even kill you, so they’re regulated on multiple levels.

Usage requires you have a license to drive one, which requires training. This will also teach you to anticipate and adapt to dangerous conditions out of your control, like idiots walking into traffic, and inclement weather.

Automobile fabrication standards prioritize safety; seat belts, airbags, emergency braking systems, and even speed governors/limiters are mandatory.

Roads and surrounding infrastructure have evolved design standards to ensure there are significant multi-sensory devices and barriers to prevent living beings from getting in front of 4-ton objects traveling at high speeds. DON’T WALK.

Now, as on-board electronics and computers have grown in complexity, we have officially arrived at self-driving cars. The primary proposition for these has included the notion that handing control over to robots is safer, since they will never get tired, be subject to distractions, get inebriated, etc. But we still need to feed the vehicle fuel/energy, take it in for maintenance/checkups, and so on. The machines are not quite yet fully autonomous from us.

All of this to say, in the case of AI-generated content, we are most likely going to see the rise of tools to combat fake content, establish human-made authenticity, and probably even some mechanisms for limiting copyrighted content from being displayed across certain media types.

As an example, the Allen Institute for Artificial Intelligence (AI2) responded to expressed concern over neural fake news with a tool to detect it, called Grover. If we are experiencing a serious misinformation problem, think about what that means once you can prove something is not fake! Now that’s a form of valuation I can get behind.

Society will always lean heavily into making things safer - but what that means right now is really, really vague when it comes to AI. Some are even arguing that AI exists to make things safer in the first place. I think that as artists and designers, we have a lot of work to do to show what putting intention into our art means, and why it’s important in the context of AI. It’s already hard enough trying to explain this to people without AI being a part of the topic.

Part II of this series is going to get a little more technical. I will touch on OpenAI vs DeepMind, and also take a closer look at GPT-1/2/3 for generative language modeling. In Part III, I’m going to go ham on the art discussion.

Got any insights about all of this? Voice your opinion!

Speaking of which:

So uh yeah, subscribe, share, and let the world know I’m a real human.

My 1 on 1 interview with Flashfox drops this weekend!

bleep bloop beep.